AI Bias in Healthcare: A Silent Epidemic

As Dr. Elena Torres, a seasoned cardiologist, scrolled through patient records during a shift at Riverside Medical Center, she noticed an alarming discrepancy. A prominent AI tool designed to predict patients’ risk of heart disease had flagged nearly all of her White patients as high risk but missed nearly 40% of Black patients with chronic conditions. “It’s like the AI has a blind spot for people who don’t reflect the dataset it learned from,” she said, frustrated. The incident is not isolated. It reflects a growing concern within healthcare about AI bias, a problem that arises from the interplay of data, algorithms, and human assumptions.

Bias: A Threat to Equity in Healthcare

In healthcare, “bias” can be defined as any systematic and unfair difference in how predictions are generated for different patient groups, which in turn produces disparities in care delivery. Researchers have highlighted that these disparities can lead to unequal benefit or harm for patients, often exacerbating existing healthcare inequities. Dr. Omar Jamal, a leading AI ethicist, emphasizes the urgency: “When AI fails to see certain groups, it doesn’t just misdiagnose; it actively reinforces a broken system.”
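One way to make the definition above concrete is to compare how often a model misses truly at-risk patients in each group. The sketch below is only an illustration: the function names, the group labels "A" and "B", and the toy labels are all invented for this example, and the false negative rate is just one of several metrics that could be compared across groups.

```python
def false_negative_rate(y_true, y_pred):
    """Share of truly positive cases that the model failed to flag."""
    preds_for_positives = [p for t, p in zip(y_true, y_pred) if t == 1]
    if not preds_for_positives:
        raise ValueError("no positive cases in this group")
    return preds_for_positives.count(0) / len(preds_for_positives)


def fnr_by_group(y_true, y_pred, groups):
    """Compute the false negative rate separately for each group label."""
    by_group = {}
    for truth, pred, group in zip(y_true, y_pred, groups):
        truths, preds = by_group.setdefault(group, ([], []))
        truths.append(truth)
        preds.append(pred)
    return {g: false_negative_rate(t, p) for g, (t, p) in by_group.items()}


# Invented toy data: every patient is truly at risk (1);
# the hypothetical model misses two patients in group "B".
y_true = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1]
y_pred = [1, 1, 1, 1, 1, 1, 1, 1, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = fnr_by_group(y_true, y_pred, groups)
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A large gap between groups is a signal worth investigating, not proof of unfairness on its own; small groups in particular produce noisy rates.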

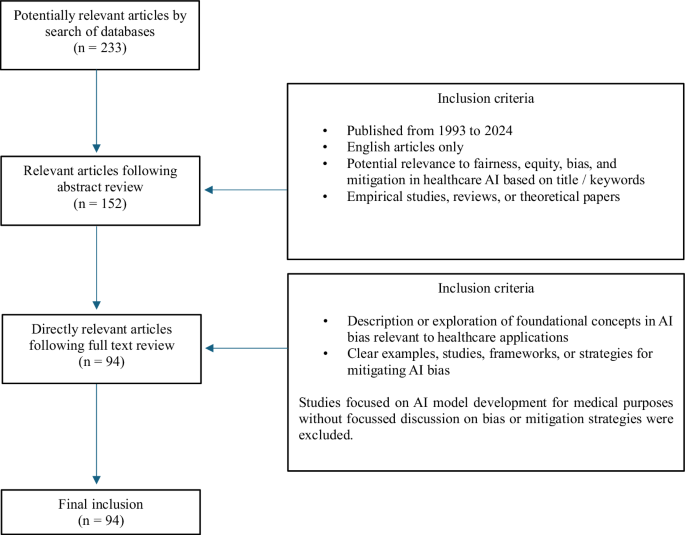

Methodology: A Deep Dive into AI Bias Literature

We conducted a comprehensive review of the literature on AI bias in healthcare, examining English-language articles published between 1993 and 2024. We searched databases such as PubMed and Google Scholar, using Boolean operators to combine keywords such as “AI bias,” “healthcare disparities,” and “algorithmic bias” across the millions of articles they index. The search yielded 233 potentially relevant articles, which we narrowed to 94 after rigorous screening. The review was designed to capture a balanced view, integrating foundational perspectives with contemporary discussions on bias.
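As a rough illustration of how Boolean operators combine keywords, the sketch below joins synonyms with OR and joins concept groups with AND. The helper name `build_boolean_query` and the way the three keywords are grouped are assumptions made for this example; the actual search strings used in the review are not given in the text.

```python
def build_boolean_query(term_groups):
    """Join terms with OR inside each group, then join the groups with AND."""
    clauses = []
    for group in term_groups:
        quoted = [f'"{term}"' for term in group]
        clauses.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(clauses)


# Hypothetical grouping of the keywords named in the text.
query = build_boolean_query([
    ["AI bias", "algorithmic bias"],
    ["healthcare disparities"],
])
print(query)
```

The screening step described above kept 94 of the 233 candidate articles, or roughly 40% (94 / 233 ≈ 0.403).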

Understanding Bias Types

- Selection Bias: Occurs when certain groups are more likely than others to be included in a dataset or study, skewing results.

- Representation Bias: Occurs when training data lacks diversity, so the resulting model fails to generalize effectively.

- Confirmation Bias: Occurs when developers unconsciously favor data that supports their pre-existing beliefs.

The phrase “bias in, bias out” rings particularly true in the healthcare AI context, emphasizing that models trained on flawed data often yield flawed outcomes. Various studies, including one by Dr. Anita Chen, found that up to 50% of healthcare AI models have a high risk of bias, often due to imbalanced datasets or weak algorithm design.
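A simple first check for representation bias and imbalanced datasets is to compare each group's share of the training data with its share of the population the model is meant to serve. The following is a minimal sketch under stated assumptions: the group labels, the reference shares, and the 5-percentage-point cutoff are all made up for illustration, and `representation_gaps` is a hypothetical helper, not a function from any library.

```python
from collections import Counter


def representation_gaps(train_groups, reference_shares):
    """Return (training share - reference share) for each reference group."""
    if not train_groups:
        raise ValueError("training data is empty")
    counts = Counter(train_groups)
    total = len(train_groups)
    return {
        group: counts.get(group, 0) / total - ref_share
        for group, ref_share in reference_shares.items()
    }


# Invented numbers: group labels of 100 training records and the
# assumed make-up of the target patient population.
train_groups = ["A"] * 80 + ["B"] * 15 + ["C"] * 5
reference_shares = {"A": 0.60, "B": 0.25, "C": 0.15}

gaps = representation_gaps(train_groups, reference_shares)

# Flag groups whose training share falls more than 5 points short
# of their reference share (an arbitrary cutoff for this sketch).
underrepresented = [g for g, gap in gaps.items() if gap < -0.05]
print(underrepresented)
```

A check like this only catches imbalance in group counts. It says nothing about label quality or measurement differences between groups, which can also feed "bias in, bias out."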

Nuances of Fairness, Equality, and Equity

The concepts of fairness, equality, and equity are pivotal to healthcare AI ethics. Fairness encompasses distributive justice, while equality aims for equal treatment. Equity, however, acknowledges that some groups require additional support to achieve comparable health outcomes. Navigating these distinctions is critical, as blanket policies may inadvertently deepen existing disparities.

“It’s essential to build AI models with an awareness of social dynamics,” says Dr. Lila Schwartz, a sociologist specializing in technology and health. “Only then can we hope to achieve true equity rather than a mere illusion of fairness.”

Human and Algorithmic Biases: A Double-Edged Sword

Biases can originate from both human actions and algorithmic processes, and they can enter at each stage of the AI model lifecycle: conception, data collection, algorithm development, and clinical deployment.

Human Biases

Many biases in healthcare AI stem from historical human perceptions and systemic inequities. Implicit biases influence how data is collected and interpreted. For example, a 2022 study indicated that data collection activities at clinical sites often lack sufficient representation from minority groups. This limitation perpetuates existing healthcare inequalities, echoing through generations.

Algorithmic Biases

Algorithmic biases emerge from inappropriate feature selection or from inappropriate techniques used during model training. In a landmark study by Dr. David Ng, researchers observed that algorithms developed with limited datasets often fail to reflect the complexity and diversity of human health, resulting in significant discrepancies in care quality across patient backgrounds.

Mitigation Strategies

To address these pervasive biases, a model lifecycle approach is vital, emphasizing the need for bias mitigation at various stages:

- Conception Phase: Assemble diverse AI teams to minimize implicit biases during model design.

- Data Collection Phase: Utilize a variety of data sources to ensure demographic representation.

- Algorithm Development Phase: Validate models rigorously across diverse population subgroups.

- Deployment Phase: Implement human-in-the-loop strategies to maintain oversight over AI recommendations.
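The deployment-phase item in the list above can be sketched as a simple routing rule: predictions that fall close to the decision threshold are sent to a clinician instead of being acted on automatically. This is only one possible human-in-the-loop design. The function name `route_prediction`, the 0.5 threshold, and the 0.15 review margin are hypothetical values chosen for illustration and are not clinically validated.

```python
def route_prediction(risk_score, threshold=0.5, review_margin=0.15):
    """Return 'high_risk', 'low_risk', or 'needs_review' for one risk score.

    Scores within `review_margin` of `threshold` are treated as uncertain
    and routed to a human reviewer.
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if abs(risk_score - threshold) < review_margin:
        return "needs_review"
    return "high_risk" if risk_score >= threshold else "low_risk"


# Invented model outputs for four patients.
scores = [0.05, 0.42, 0.58, 0.93]
decisions = [route_prediction(s) for s in scores]
print(decisions)
```

Widening the margin sends more cases to clinicians and narrows the set of automated decisions. A confidently wrong score still bypasses review under this rule, so this kind of routing complements subgroup validation and does not replace it.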

Dr. Torres shared her hope that through ongoing education and continual refinement of AI systems, healthcare professionals can counteract biases: “We need models that not only predict but also reflect the entirety of our patient population.”

Future Directions: A Collaborative Approach

As healthcare continues to evolve alongside advancements in AI technologies, the role of Diversity, Equity, and Inclusion (DEI) principles becomes paramount. Collaboration among policymakers, healthcare professionals, and researchers is vital to fostering a responsible AI framework that prioritizes equitable care for all. As Dr. Jamal aptly states, “We stand at a crossroads: either we build a system that reflects our society in all its complexity or we risk perpetuating a cycle of discrimination.”

With the stakes this high, it’s not merely a challenge for AI developers; it is a societal responsibility that calls for immediate action, continuous dialogue, and the relentless pursuit of fairness in healthcare. By aligning technological advancements with ethical considerations, we can forge a future where AI serves as a tool for equity rather than a perpetuator of bias.

Source: www.nature.com